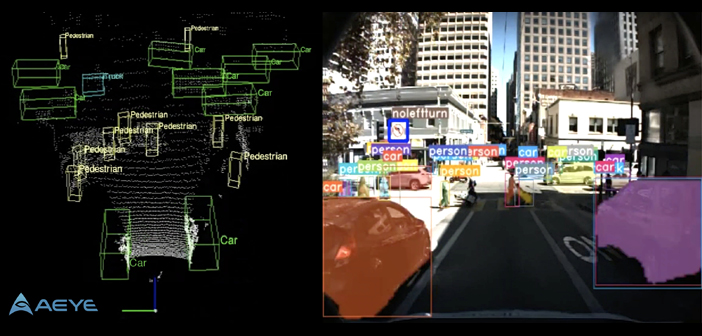

Artificial perception specialist AEye has announced what it claims is the world’s first commercially available 2D/3D perception system designed to run in the sensors of autonomous vehicles.

For the first time, basic perception can be distributed to the edge of the sensor network. This enables designers of autonomous vehicles to use sensors not only to search for and detect objects, but also to acquire and ultimately classify and track them. AEye adds that the ability to collect this information in real time both enables and enhances existing centralized perception software platforms by reducing latency, lowering costs and securing functional safety.

This in-sensor perception system is intended to accelerate the availability of autonomous features in vehicles across all SAE levels of human engagement, allowing auto makers to enable the right amount of autonomy for any desired use case – including the most challenging edge cases. In essence, it provides autonomy ‘on demand’ for ADAS, mobility and adjacent markets.

AEye reveals that its achievement is the result of its flexible iDAR platform that enables intelligent and adaptive sensing. The iDAR platform is based on biomimicry and replicates the elegant perception design of human vision through a combination of agile lidar, fused camera and artificial intelligence.

AEye describes it as the first system to take a fused approach to perception, leveraging iDAR’s Dynamic Vixels, which combine 2D camera data (pixels) with 3D lidar data (voxels) inside the sensor. This software-definable perception platform allows disparate sensor modalities to complement each other, enabling the camera and lidar to work together to make each sensor more powerful, while providing “informed redundancy” that ensures a functionally safe system.

AEye believes its approach solves one of the most difficult challenges for the autonomous vehicle industry as it seeks to deliver perception at speed and at range: improving the reliability of detection and classification, while extending the range at which objects can be detected, classified and tracked. The sooner an object can be classified, and its trajectory accurately forecast, the more time the vehicle has to brake, steer or accelerate in order to avoid collisions.

“We believe that the power and intelligence of the iDAR platform transforms how companies can create and evolve business models around autonomy without having to wait for the creation of full Level 5 Robotaxis,” said Blair LaCorte, president of AEye.

“Auto makers are now seeing autonomy as a continuum and have identified the opportunity to leverage technology across this continuum. As the assets get smarter, OEMs can decide when to upgrade and leverage this intelligence. Technology companies that provide software-definable and modular hardware platforms now can support this automotive industry trend.”

AEye’s iDAR software reference library will be available in Q1 2020.